Move over, Sophia: you might have company. At Tesla’s AI Day, Elon Musk said the company plans to build a robot in human form, leveraging some of its vehicle technology. The path of humanoid robots, much like everything else, will go in two possible directions. Constructively, care robots, companion robots, and those that handle difficult repetitive tasks can help address mounting challenges as well as long-standing ones. On the destructive side, these robots may someday encroach upon those traits that make us distinctly human. Our path forward continues to represent a balancing act. Elon Musk describes his vision in the video below.

Elon Musk

How Close Are We To Autonomous Driving?

I’m extremely confident that level 5 [self-driving cars] or essentially complete autonomy will happen, and I think it will happen very quickly. I remain confident that we will have the basic functionality for level 5 autonomy complete this year.

Elon Musk

The question of full autonomy goes back to 2014. There was a time when leaders across industry focused on the disruptive potential of autonomous vehicles. Here in 2021, those disruptive scenarios have not emerged on the timeline many expected. So, how close are we really to level 5 autonomous driving? The quote above provides one man’s opinion. Granted, that opinion comes from Elon Musk, a person who has made technology history for decades. As this article via Nick Hobson describes, vehicles that have achieved level 5 autonomy can drive in all circumstances, removing the need for a steering wheel and driver’s seat. Many experts believe reaching level 5 autonomy is next to impossible. Those beliefs stem from the complexity of the human mind and the intuition we use in decision making.

The Third Tipping Point

I have invested considerable time exploring the tipping points in human history. When I say tipping point, I mean a fundamental change in the nature of being human. As described in my post on the topic, there were two main tipping points in human history: from hunter-gatherer to agriculture, and from agriculture to our industrial society.

Possible Futures: Exploring Deepfake and Neuralink

Two recent articles highlight the dilemma faced in this era of rapid innovation: the potential to enhance humanity, and the opportunity to diminish it. This article on deepfakes describes the challenge that society will face as deepfake video and audio make it impossible to tell the difference between reality and fiction. Audio attacks using convincing forgeries can send stocks plummeting or soaring. How about mimicking a CEO’s voice to ask a senior financial officer to transfer money? These are real examples provided by Symantec. This short video describes the money transfer scenario.

Autonomous Vehicles and the Perils of Prediction

I am a big believer in rehearsing the future versus attempting to predict it. The wild swings we experience when following future scenarios can range from bold predictions of imminent manifestation to dire warnings that a scenario will never be realized. In this recent article, the author describes how the auto industry is rethinking the timetable for realizing level 5 autonomy. It turns out we underestimate the human intelligence required to drive a car and overestimate our ability to replicate it. The article provides simple examples:

When a piece of cardboard blows across a roadway 200 yards ahead, for example, human drivers quickly determine whether they should run over it or veer around it. Not so for a machine. Is it a piece of metal? Is it heavy or light? Does a machine even “know” that a heavy chunk of metal doesn’t blow across the roadway? It’s a tougher problem.

Or how about this challenge, which humans for the most part handle very well:

When a car arrives at a four-way stop at the same time as another vehicle, for example, it’s a dilemma for a machine. Human drivers tend to nod or make eye contact, but micro-controllers can’t do that.

Life 3.0: Being Human in the Age of Artificial Intelligence

I just added another very good book to the Book Library: Life 3.0: Being Human in the Age of Artificial Intelligence, a New York Times Best Seller. Author Max Tegmark takes a fascinating journey through possible AI futures. His physics-oriented perspective provides an interesting point of view as humanity wrestles with the ultimate path of artificial intelligence.

Mr. Tegmark tackles the question of how much machines will encroach on human domains by drawing on a metaphor from Hans Moravec:

The Fourth Age

Byron Reese recently authored a book titled The Fourth Age. I thoroughly enjoyed this fascinating look at history, and the focus on possible futures. In looking at the future, Mr. Reese explores the reasons that experts disagree on the path of these possible futures. He asks: why do Elon Musk, Stephen Hawking, and Bill Gates fear artificial intelligence (AI) and express concern that it may be a threat to humanity’s survival; and yet, why do an equally illustrious group, including Mark Zuckerberg, Andrew Ng, and Pedro Domingos, find this viewpoint so far-fetched as to be hardly even worth a rebuttal? The answer, as described by the author, lies not in what we know, but in what we individually believe. This theme, which runs throughout the book, is an interesting piece of self-reflection. See how you would answer the questions posed by the author.

What are your thoughts about the Future?

I had the pleasure of developing an online thought leadership course focused on our emerging future back in May of this year. I had the added pleasure of working with futurists Gerd Leonhard, Gray Scott, and Chunka Mui, along with several industry leaders. The free Thought Leadership Course is available through May of next year. The course has been invaluable to me, as it provided a platform for dialog about our emerging future. I was thrilled with the thought-provoking dialog that occurred through our moderated forum. To all those who participated, thank you.

During the two-week course, several poll questions were posed to help us understand how the community is feeling about critical topics like ethics, our economy, and the likely transformative period that lies ahead. Here are the questions and their responses. There is plenty of time to take the course and add your voice to the conversation.

Technology and Ethics

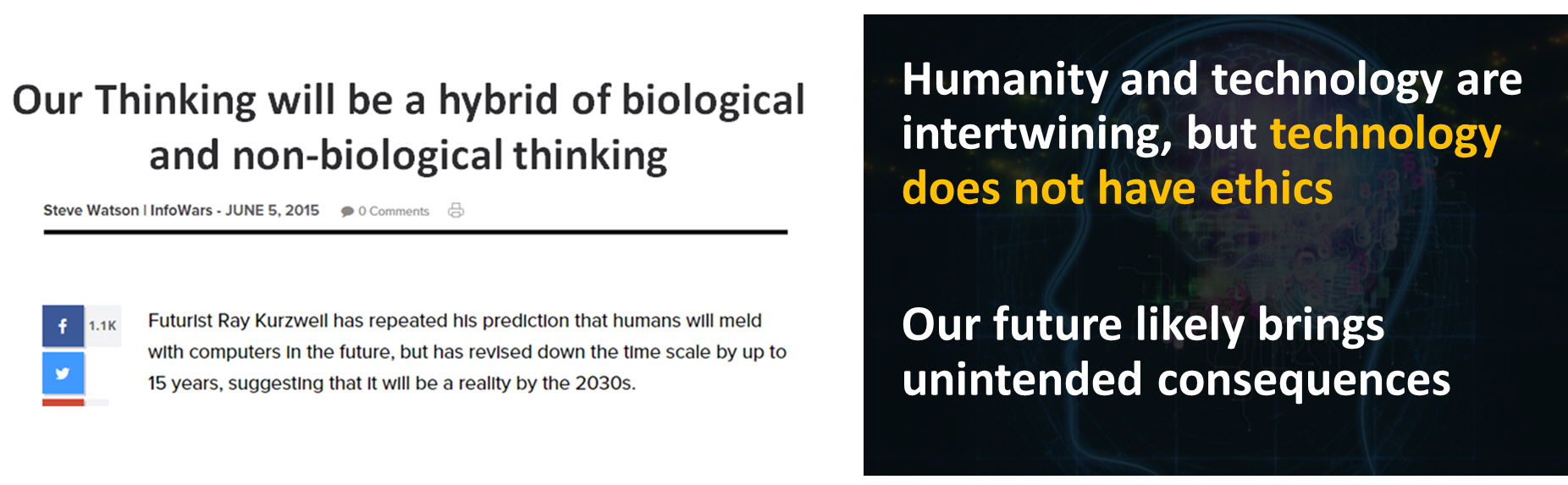

Some in the futurist community are focused on technology and ethics. Gerd Leonhard has been particularly vocal on the topic. I’ve dedicated a section of my keynote to what I believe will be a growing dialog. I use this slide to pose a question to the audience:

The example provided above comes from Ray Kurzweil, the famous futurist, inventor, and author. In an appearance at last year’s Exponential Finance conference, Kurzweil said this:

“Our thinking will be a hybrid of biological and non-biological thinking. We’re going to gradually merge and enhance ourselves. In my view, that’s the nature of being human – we transcend our limitations. We’ll be able to extend (our limitations) and think in the cloud. We’re going to put gateways to the cloud in our brains.”